The Rise of AI-Powered Code Review: Why Now?

Manual code review is slow. As codebases grow, developers spend more time reading pull requests than writing features, which leads to burnout and missed deadlines.

The core issue isn’t a lack of skilled developers but a simple constraint: time. Thorough code review requires focused attention, and that’s a scarce resource. Traditional methods struggle to scale with modern development practices, particularly in agile environments. This is where AI-powered code review services enter the picture, as a potential way to relieve that pressure.

The history of code review itself demonstrates this evolution. Early practices were often informal, relying on pair programming or ad-hoc inspections. The rise of version control systems like Git formalized the process, introducing pull requests and branching strategies. Now, we’re seeing a third wave – the integration of artificial intelligence to automate and augment the review process.

AI isn’t intended to replace human reviewers, but to act as a force multiplier. These tools can automatically identify common bugs, enforce coding standards, and suggest improvements, freeing up human developers to focus on more complex issues like architectural design and business logic. The goal is to improve code quality, reduce technical debt, and accelerate the development process, all while preserving the essential human element of collaboration and knowledge sharing.

GitHub Copilot: Beyond Autocomplete, A Code Review Assistant?

GitHub Copilot has become ubiquitous. However, its capabilities extend beyond simple autocomplete; it’s increasingly being used as a code review assistant. Copilot’s integration with GitHub makes it a natural fit for reviewing pull requests directly within the development workflow.

One of Copilot’s strengths lies in its ability to identify common bugs and security vulnerabilities. It can flag potential issues like null pointer exceptions, memory leaks, and insecure coding practices. It also excels at enforcing coding standards, ensuring consistency and readability across the codebase. This is particularly useful for teams with strict style guides or compliance requirements.

Copilot’s effectiveness is directly tied to the quality and quantity of code it has been trained on. It performs best with popular languages like Python, JavaScript, and Java, because of the vast amount of publicly available code. Results are weaker for niche languages like Haskell or Erlang and for highly specialized frameworks, and it can fail to adapt to unconventional internal style guides.

A key limitation of Copilot, at least in its current iteration, is its lack of deep contextual understanding. While it can identify syntactic errors and potential bugs, it may struggle to grasp the overall purpose of the code or identify more subtle logical flaws. This is where human review remains essential. Copilot is best viewed as a first pass, flagging potential issues for a human reviewer to investigate further.

The tool seems to perform better on smaller to medium-sized projects. Larger, more complex codebases can overwhelm its analysis capabilities, leading to increased false positives and reduced accuracy. This is an area that GitHub is actively working to improve, but it’s a significant consideration for organizations with large-scale projects.
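One tool-agnostic way to work around size limits is to split a large change into batches that each stay under a fixed budget before asking for a review. The sketch below is a minimal illustration and not a description of how Copilot works internally: the four-characters-per-token estimate is a rough heuristic, and the names `estimate_tokens` and `batch_files` as well as the budget value are assumptions made for this example.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    """Rough size estimate: assumes ~4 characters per token (a heuristic, not a real tokenizer)."""
    return max(1, len(text) // 4)


def batch_files(files: Dict[str, str], budget: int) -> List[List[str]]:
    """Greedily group file names into batches whose estimated size stays within `budget`.

    A file that exceeds the budget on its own still gets a batch to itself.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for name, content in files.items():
        cost = estimate_tokens(content)
        # Close the current batch if adding this file would exceed the budget.
        if current and used + cost > budget:
            batches.append(current)
            current, used = [], 0
        current.append(name)
        used += cost
    if current:
        batches.append(current)
    return batches
```

Each batch can then be submitted as its own review request, which keeps related files together as long as they fit and avoids silently truncating the rest.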

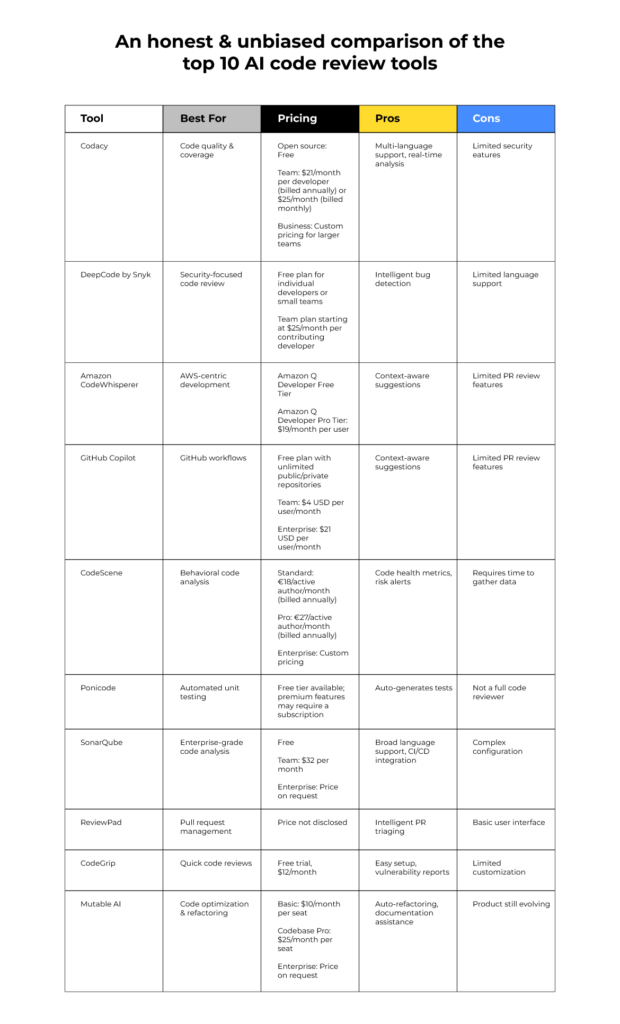

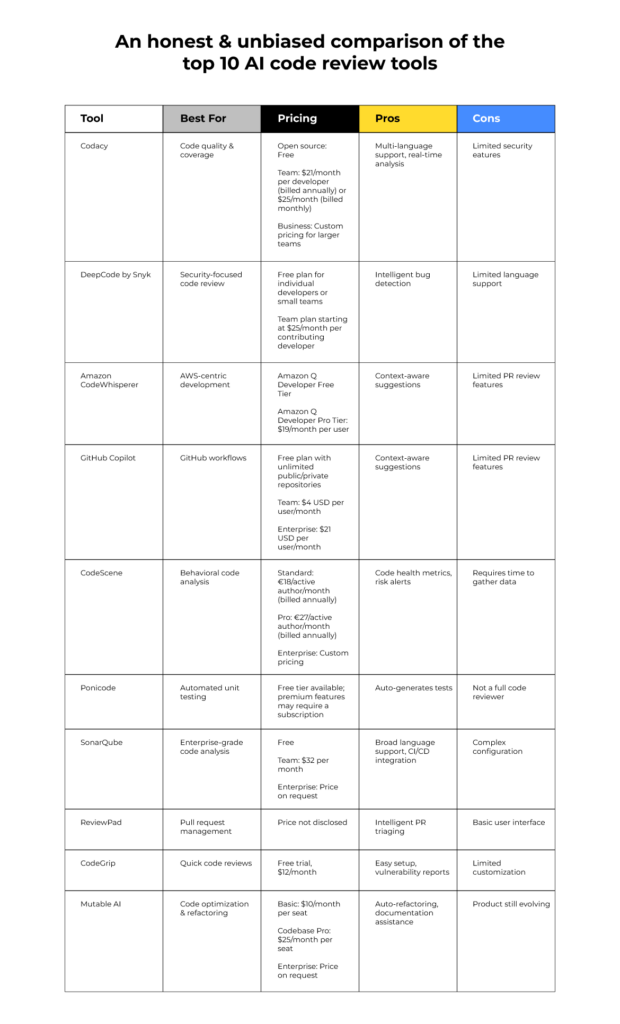

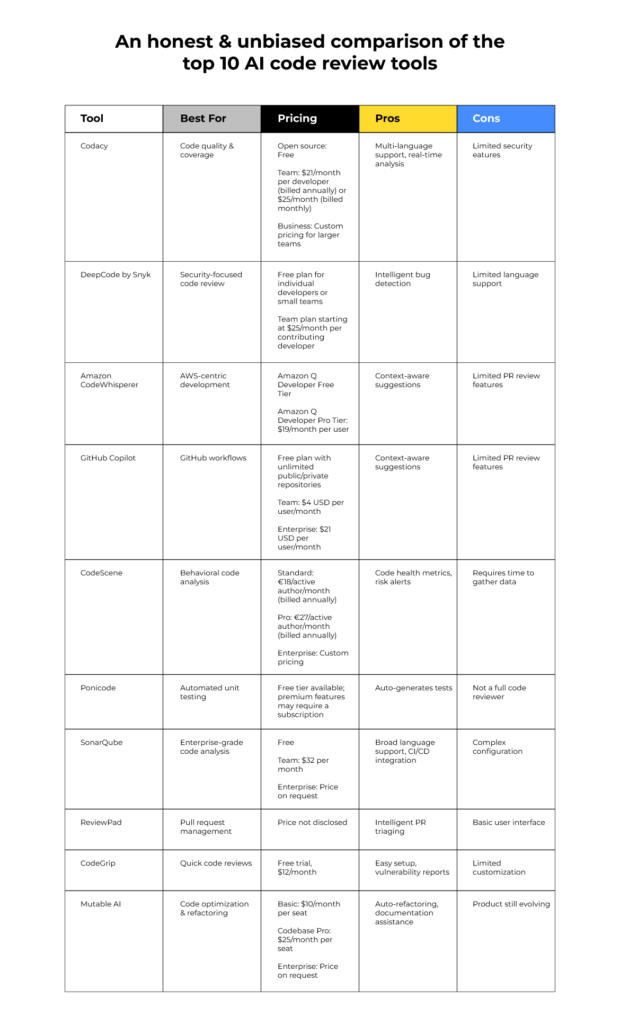

AI Code Review Services

- GitHub Copilot - Integrated directly into Visual Studio Code, Visual Studio, Neovim, and JetBrains IDEs, Copilot offers inline suggestions and can generate entire functions. Developer feedback indicates it excels at identifying common errors and suggesting improvements to code style, but can sometimes miss nuanced logic flaws.

- Claude Dev - Anthropic’s Claude Dev is positioned as a strong competitor, emphasizing its ability to handle larger context windows. This potentially allows for more comprehensive code reviews, particularly for complex projects. Early user reports suggest it is effective at identifying security vulnerabilities.

- GPT-4o - OpenAI’s GPT-4o, the latest iteration, boasts improved speed and multimodal capabilities. While not exclusively a code review tool, its enhanced reasoning abilities are expected to translate to more accurate and insightful code analysis. Reports indicate it performs well on complex coding tasks.

- Code Quality Focus - All three services demonstrate varying degrees of success in identifying code smells, such as duplicated code, overly complex methods, and potential performance bottlenecks. However, the effectiveness depends heavily on the complexity of the codebase and the specificity of the prompts provided.

- Security Vulnerability Detection - Claude Dev has received attention for its security-focused capabilities. GitHub Copilot and GPT-4o are also capable of identifying potential vulnerabilities like SQL injection or cross-site scripting (XSS), but may require more specific prompting.

- Context Window Limitations - A recurring theme in developer feedback is the importance of context window size. While Claude Dev is marketed with a larger context window, all three services can struggle with very large codebases or complex dependencies if the relevant code isn't included in the review request.

- False Positives/Negatives - Developers have reported instances of both false positives (flagging correct code as problematic) and false negatives (missing actual bugs) across all three platforms. Thorough manual review remains crucial, even with AI assistance.

- Integration & Workflow - GitHub Copilot’s tight integration with popular IDEs provides a seamless workflow for many developers. Claude Dev and GPT-4o typically require integration through APIs or third-party tools, which can add complexity.
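The false positives and false negatives mentioned in the list above can be tracked with two standard measures, precision and recall, once a human has confirmed or rejected each flagged issue. The following is a minimal sketch; the `ReviewTally` class and its field names are hypothetical, and any counts you feed it would have to come from your own team's review history.

```python
from dataclasses import dataclass


@dataclass
class ReviewTally:
    true_positives: int   # flagged by the tool, confirmed as a real problem
    false_positives: int  # flagged by the tool, but the code was fine
    false_negatives: int  # real problem that the tool did not flag

    def precision(self) -> float:
        """Share of the tool's flags that were real problems."""
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0

    def recall(self) -> float:
        """Share of real problems that the tool actually flagged."""
        real = self.true_positives + self.false_negatives
        return self.true_positives / real if real else 0.0
```

Low precision means reviewers waste time dismissing noise; low recall means bugs slip through, which is why manual review stays in the loop.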

Claude Dev: A Conversational Approach to Code Quality

Claude Dev distinguishes itself from other AI code review tools with its conversational interface. Instead of simply providing a list of issues, Claude Dev engages in a dialogue with the developer, explaining its reasoning and soliciting feedback. This back-and-forth interaction can lead to a more nuanced and effective review process.

The conversational aspect is particularly valuable when dealing with ambiguous code or complex logic. Claude Dev can ask clarifying questions, probe for edge cases, and challenge assumptions, helping developers to identify potential problems they might have overlooked. This is a significant advantage over purely automated tools that lack the ability to engage in a meaningful dialogue.

Claude Dev’s ability to understand context is another key strength. It doesn’t just analyze code in isolation; it considers the surrounding code, the project’s architecture, and the developer’s intent. This allows it to provide more relevant and actionable feedback. In my testing, Claude Dev identified race conditions in asynchronous functions that standard linters missed because it analyzed the entire project structure rather than just the active file.
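To make the race-condition point concrete, here is a small, self-contained illustration of the kind of bug a syntax-level linter will not flag. It is not the code from the testing described above; the `Inventory` class and its method names are invented for this example. The unsafe method checks a value, awaits, and then modifies it, so other tasks can run between the check and the update.

```python
import asyncio


class Inventory:
    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = asyncio.Lock()

    async def reserve_unsafe(self) -> bool:
        # Check-then-act with an await in between: another task can run
        # after the check and before the decrement.
        if self.stock > 0:
            await asyncio.sleep(0)  # stands in for a database or network call
            self.stock -= 1
            return True
        return False

    async def reserve_safe(self) -> bool:
        # Holding the lock makes the check and the decrement one atomic step.
        async with self._lock:
            if self.stock > 0:
                await asyncio.sleep(0)
                self.stock -= 1
                return True
            return False


async def run(method_name: str, stock: int, buyers: int) -> int:
    """Run `buyers` concurrent reservations and return the remaining stock."""
    inv = Inventory(stock)
    await asyncio.gather(*(getattr(inv, method_name)() for _ in range(buyers)))
    return inv.stock
```

With one item in stock and three concurrent buyers, `asyncio.run(run("reserve_unsafe", 1, 3))` ends with a negative stock count, while the `reserve_safe` variant stops at zero. Both methods are syntactically valid, which is why spotting the difference requires reasoning about how the code is called.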

However, the conversational approach also has its drawbacks. It can be more time-consuming than traditional code review, as it requires active participation from both the AI and the developer. This may not be ideal for fast-paced development cycles where speed is paramount. The quality of the conversation also depends on the developer’s ability to articulate their reasoning and respond effectively to Claude Dev’s prompts.

The platform seems to encourage knowledge sharing within a team. The conversations are logged and can be accessed by other developers, providing a valuable resource for learning and collaboration. This can help to improve the overall code quality and reduce the risk of repeating the same mistakes.

GPT-4o Code Review: The Power of Multimodality

GPT-4o, OpenAI’s latest model, introduces multimodal capabilities to code review. This means it can analyze not only code, but also other types of data, such as diagrams, flowcharts, and architectural designs. This broader understanding of the codebase can lead to more comprehensive and accurate reviews.

The ability to analyze visual representations of code is a significant advantage. Many developers use diagrams and flowcharts to communicate complex logic or system architecture. GPT-4o can process these visuals, integrating them into its understanding of the codebase and identifying potential inconsistencies or errors. This is a feature that Copilot and Claude Dev currently lack.

GPT-4o can also generate test cases automatically, based on its analysis of the code. This can help to improve code coverage and identify potential bugs that might have been missed during manual testing. It’s also capable of identifying potential security vulnerabilities, such as SQL injection flaws or cross-site scripting vulnerabilities.
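As a concrete reference for the SQL injection case, the sketch below contrasts a vulnerable query with its parameterized fix, using Python's standard `sqlite3` module. The table, data, and function names are made up for illustration; this is the kind of pattern a reviewer, human or AI, would be expected to flag, not output from any of the tools discussed here.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two sample users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "user")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is pasted straight into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats `name` strictly as data.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Passing the input `x' OR '1'='1` to `find_user_unsafe` returns every row in the table, while `find_user_safe` returns nothing because no user has that literal name.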

Compared to Copilot and Claude Dev, GPT-4o’s cost and speed profile is still evolving. OpenAI bills by usage rather than by seat, so the total varies with how much code you send. At the time of writing, API costs for GPT-4o are roughly $5 per million input tokens, which can make it significantly more expensive than Copilot’s flat monthly fee when reviewing large repositories. Its speed improvements are likely tied to the model’s architecture and optimization.
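The comparison between metered and flat pricing comes down to simple arithmetic. The sketch below uses the rough $5 per million input tokens figure quoted above as a default; it ignores output tokens, and the flat fee is left as a parameter because this article does not state one. Treat both function names and any numbers you plug in as assumptions to check against current pricing pages.

```python
def api_review_cost(input_tokens: int, usd_per_million_input: float = 5.0) -> float:
    """Input-token cost in USD. The default rate is the article's rough figure."""
    return input_tokens / 1_000_000 * usd_per_million_input


def break_even_tokens(flat_monthly_fee_usd: float, usd_per_million_input: float = 5.0) -> float:
    """Monthly input-token volume at which metered cost equals a flat fee."""
    return flat_monthly_fee_usd / usd_per_million_input * 1_000_000
```

For example, sending 2 million input tokens in a month costs about $10 at this rate, and a hypothetical $20 flat fee would break even at 4 million input tokens per month.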

One potential downside is the reliance on the quality of the visual inputs. If the diagrams or flowcharts are inaccurate or incomplete, GPT-4o’s analysis will be flawed. It’s crucial to ensure that the visual representations of the code are up-to-date and consistent with the actual implementation.

Comparing Feature Sets: A Qualitative Assessment

A direct, numeric comparison of these tools is difficult due to the rapidly evolving nature of AI and the lack of standardized benchmarks. Instead, a qualitative assessment reveals distinct strengths. Copilot excels at rapid, automated code completion and identifying common bugs within established coding patterns.

Claude Dev is the better choice for legacy migrations where you need to ask the AI why it’s suggesting a specific refactor. It turns the review into a teaching moment rather than a simple checklist. However, this comes at the cost of potentially increased review time.

GPT-4o distinguishes itself with its multimodal capabilities, offering a more holistic view of the codebase. It’s well-suited for projects with significant visual documentation or those requiring automated test case generation and security vulnerability analysis. The cost and performance characteristics are still being refined.

Language support is generally broad across all three tools, with strong support for popular languages like Python, JavaScript, and Java. However, Copilot appears to have an edge in terms of supporting a wider range of languages and frameworks, due to its extensive training dataset. Customization options vary. Copilot offers limited customization, while Claude Dev and GPT-4o provide more flexibility.

Reporting features also differ. Copilot focuses on inline suggestions within the IDE. Claude Dev provides a summary of the conversation and identified issues. GPT-4o offers detailed reports on potential vulnerabilities and code quality metrics.

AI Code Review Service Comparison - 2026

| Feature | GitHub Copilot | Claude Dev | GPT-4o |
|---|---|---|---|
| Ease of Use | Excellent - Seamless integration within popular IDEs. | Good - Requires some familiarity with Anthropic's platform. | Good - Accessible via API and web interface, but setup can be complex. |
| Contextual Understanding | Good - Strong with code completion, but can struggle with broader architectural context. | Excellent - Demonstrates superior understanding of complex codebases and project goals. | Very Good - Improved contextual awareness compared to prior GPT models, but may still require careful prompting. |
| Bug Detection Accuracy | Good - Effective at identifying common errors and vulnerabilities. | Very Good - Excels at finding subtle logic errors and security flaws. | Excellent - Shows promise in identifying a wide range of bugs, including edge cases. |
| Integration with Existing Tools | Excellent - Wide range of IDE integrations and GitHub Actions support. | Fair - Integration options are currently more limited, primarily through API access. | Good - Expanding integration support, with growing compatibility with CI/CD pipelines. |
| Customization Options | Fair - Limited customization beyond basic settings and code style preferences. | Good - Offers some degree of customization through prompt engineering and model parameters. | Very Good - Highly customizable through detailed prompting and fine-tuning capabilities. |
| Cost-Effectiveness | Trade-off - Subscription-based, cost scales with usage and features. | Trade-off - Pricing structure is evolving, potential for higher costs with extensive use. | Trade-off - Cost depends on usage volume and access to the most powerful models. |

This comparison is qualitative. Confirm current product details in the official docs before making implementation choices.

Real-World Use Cases: Where Each Tool Shines

Consider a fast-paced startup developing a web application with a tight deadline. In this scenario, GitHub Copilot would likely be the most effective choice. Its ability to quickly identify and fix common bugs, combined with its seamless integration with GitHub, can significantly accelerate the development process.

Now, imagine a large enterprise working on a complex financial system. This project demands a high level of code quality and security. Claude Dev would be a better fit, as its conversational interface and deep contextual understanding can help to identify subtle errors and ensure compliance with regulatory requirements.

For a project involving the development of a new user interface with intricate interactions, GPT-4o’s multimodal capabilities would be invaluable. Its ability to analyze design mockups and generate test cases can help to ensure a seamless user experience and prevent usability issues.

A data science team building a machine learning model might benefit from Copilot’s ability to generate boilerplate code and identify common errors in data processing pipelines. However, Claude Dev could be helpful for reviewing the model’s logic and ensuring its interpretability. GPT-4o’s multimodal capabilities could be used to analyze data visualizations and identify potential biases.

Ultimately, the best tool depends on the specific needs of the project and the team. A hybrid approach, combining the strengths of multiple tools, may be the most effective strategy. For example, a team could use Copilot for initial code completion and automated bug detection, then follow up with a more in-depth review in Claude Dev or GPT-4o.

The Future of AI Code Review: Trends and Predictions

The field of AI code review is evolving rapidly. We can expect to see more sophisticated bug detection algorithms, capable of identifying increasingly subtle and complex errors. Improvements in natural language processing will enable AI tools to provide even more nuanced and actionable feedback.

Integration with CI/CD pipelines will become more seamless, allowing for automated code review as part of the continuous integration and continuous delivery process. This will further accelerate the development cycle and reduce the risk of introducing bugs into production.
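A pipeline integration of this kind usually reduces to a gate step: read the review tool's findings, then decide whether the build may continue. The sketch below assumes a hypothetical JSON report format (a list of objects with `file`, `line`, `severity`, and `message` fields); none of the tools discussed here is known to emit exactly this shape, so the parsing would need to be adapted to whatever your tool actually produces.

```python
import json
from typing import List

# Hypothetical severity scale used only for this example.
SEVERITY_RANK = {"low": 0, "medium": 1, "high": 2}


def blocking_findings(findings: List[dict], threshold: str = "high") -> List[dict]:
    """Return the findings at or above the `threshold` severity."""
    limit = SEVERITY_RANK[threshold]
    return [f for f in findings if SEVERITY_RANK[f["severity"]] >= limit]


def gate(report_json: str, threshold: str = "high") -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 fails it."""
    findings = json.loads(report_json)
    blockers = blocking_findings(findings, threshold)
    for f in blockers:
        print(f"{f['file']}:{f['line']} [{f['severity']}] {f['message']}")
    return 1 if blockers else 0
```

In a CI job, the script would pass the return value of `gate` to `sys.exit`, so that high-severity findings stop the merge while lower-severity ones are left for a human reviewer to weigh.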

We’ll likely see the emergence of AI-powered code generation tools that can not only suggest code snippets, but also generate entire functions or modules based on specifications. This could significantly reduce the amount of manual coding required, freeing up developers to focus on higher-level tasks.

Ethical considerations will become increasingly important. It’s crucial to address potential biases in AI algorithms and ensure that code review tools are fair and equitable. Security is another key concern; AI tools must be protected from malicious attacks and must not introduce new vulnerabilities into the codebase.

The line between AI assistance and autonomous code development will continue to blur. While fully autonomous code generation is still some way off, AI will play an increasingly significant role in all aspects of the software development lifecycle. The future of code review isn't about replacing developers, but empowering them with intelligent tools to build better software, faster.
