The Looming Quantum Threat: Why Migrate Cryptography Now?

Quantum computers aren't powerful enough to break RSA or ECC yet, but the risk is already real. The primary concern is "store now, decrypt later" attacks, in which malicious actors harvest encrypted data today so they can decrypt it once quantum computers become powerful enough. This makes long-lived data particularly vulnerable, and it is why migration needs to start now.
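One common way to reason about this risk is Mosca's inequality. It is a framing widely used in PQC planning, not one taken from the sources cited here. If x (the years the data must stay confidential) plus y (the years the migration will take) is greater than z (the years until a cryptographically relevant quantum computer exists), the data is already exposed to harvest-now attacks. The sketch below encodes the inequality. All three inputs are estimates you must supply, and the numbers in the usage line are purely illustrative.

```python
def harvest_now_decrypt_later_risk(shelf_life_years: float,
                                   migration_years: float,
                                   years_until_crqc: float) -> bool:
    """Mosca's inequality: data is exposed if x + y > z.

    x = how long the data must remain confidential
    y = how long the migration to quantum-safe cryptography will take
    z = estimated time until a cryptographically relevant quantum computer
    """
    return shelf_life_years + migration_years > years_until_crqc


# Illustrative inputs only: 10-year records, 3-year migration, 12-year estimate
print(harvest_now_decrypt_later_risk(10, 3, 12))  # True: 13 > 12
```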

The 2026 timeframe is emerging as a critical point for developers. The National Institute of Standards and Technology (NIST) is driving the standardization of post-quantum cryptography (PQC) algorithms. The first standards are already final: FIPS 203, 204 and 205 were published in August 2024, and more are to follow. 2026 isn't a hard deadline, but it's a practical target for organizations to have their transition to quantum-resistant solutions underway. Ignoring this timeline leaves systems exposed as the quantum computing field matures.

NIST’s process involved evaluating numerous candidate algorithms over several years. The goal is to identify algorithms that are resistant to attacks from both classical and quantum computers. This isn’t simply about swapping out algorithms; it requires updating cryptographic libraries, protocols, and infrastructure. Developers need to understand the implications of this transition and prepare their systems accordingly. A proactive approach to PQC migration is essential for maintaining data security in the coming years.

Understanding the Post-Quantum Algorithms: A Developer’s Primer

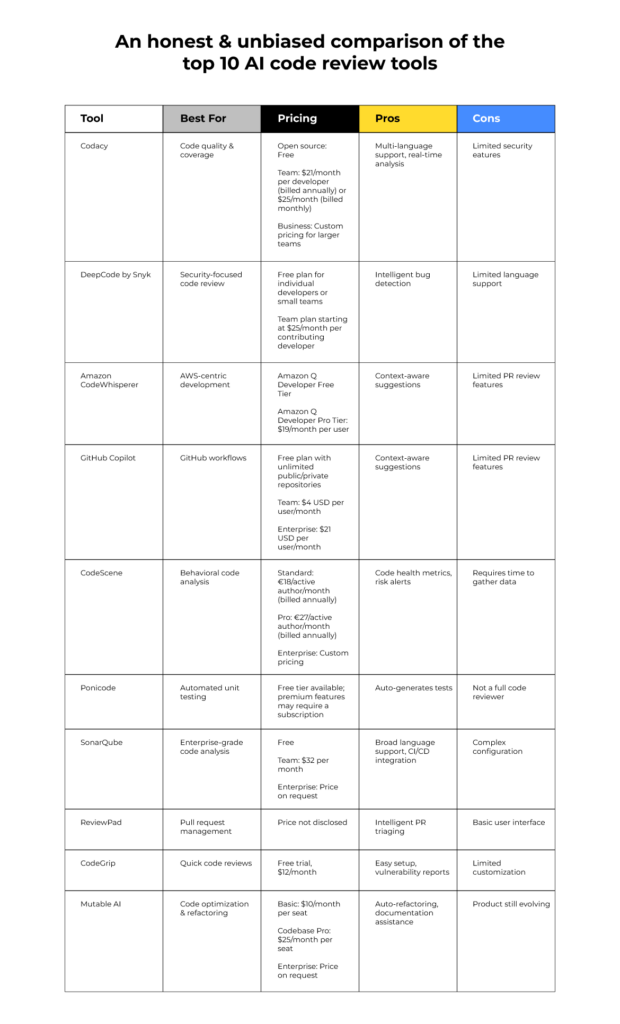

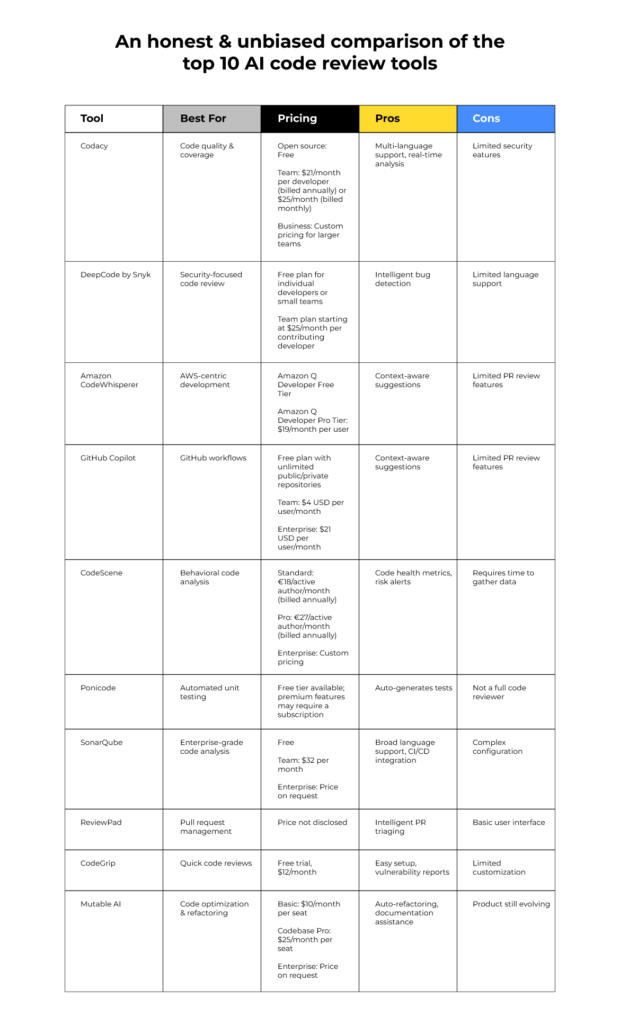

NIST has selected several algorithms for standardization, falling into two main categories: key encapsulation mechanisms (KEMs) and digital signatures. KEMs are used to securely exchange keys, while digital signatures are used to verify the authenticity and integrity of data. Developers don’t need to understand the complex mathematics behind these algorithms, but knowing what they are is crucial.

CRYSTALS-Kyber, standardized by NIST as ML-KEM (FIPS 203), is the primary KEM. It is known for relatively small keys and good performance. CRYSTALS-Dilithium, standardized as ML-DSA (FIPS 204), is a digital signature algorithm that balances security and efficiency. FALCON, slated for standardization as FN-DSA, is another signature scheme and focuses on minimizing signature size. SPHINCS+, standardized as SLH-DSA (FIPS 205), is a stateless hash-based signature scheme with a different security profile. It is slower and produces larger signatures, but its security rests only on the underlying hash function.

These algorithms are a significant departure from traditional cryptography. RSA and ECC rely on integer factorization and discrete logarithms, which Shor's algorithm breaks. The new algorithms instead rely on problems believed to resist quantum attacks: structured lattices for Kyber, Dilithium and FALCON, and hash-function security for SPHINCS+. When integrating them, developers will encounter different parameter sets and key formats, and understanding these differences is essential for a correct implementation. Libraries abstract away much of the complexity, but awareness of the underlying algorithms is still important.

- CRYSTALS-Kyber: Key Encapsulation Mechanism (KEM)

- CRYSTALS-Dilithium: Digital Signature

- FALCON: Digital Signature

- SPHINCS+: Stateless Hash-Based Signature
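The next sketch makes the parameter sets and key formats mentioned above concrete. It lists the encoded sizes in bytes for the standardized ML-KEM (Kyber) and ML-DSA (Dilithium) parameter sets, as given in FIPS 203 and FIPS 204. The `guess_param_set` helper is a hypothetical debugging aid, not a library API. If a length matches nothing, that is often the first sign of truncation or of a wrapped encoding such as DER.

```python
from typing import NamedTuple, Optional


class ParamSet(NamedTuple):
    name: str
    public_key: int  # bytes
    secret_key: int  # bytes
    output: int      # ciphertext bytes (KEM) or signature bytes (signature)


# Raw encoded sizes from FIPS 203 (ML-KEM) and FIPS 204 (ML-DSA)
PARAM_SETS = [
    ParamSet("ML-KEM-512", 800, 1632, 768),
    ParamSet("ML-KEM-768", 1184, 2400, 1088),
    ParamSet("ML-KEM-1024", 1568, 3168, 1568),
    ParamSet("ML-DSA-44", 1312, 2560, 2420),
    ParamSet("ML-DSA-65", 1952, 4032, 3309),
    ParamSet("ML-DSA-87", 2592, 4896, 4627),
]


def guess_param_set(public_key: bytes) -> Optional[str]:
    """Hypothetical helper: match a raw public key to a parameter set by length."""
    for p in PARAM_SETS:
        if len(public_key) == p.public_key:
            return p.name
    return None  # unknown length: truncated, wrapped, or a different algorithm


print(guess_param_set(bytes(1184)))  # ML-KEM-768
print(guess_param_set(bytes(1000)))  # None
```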

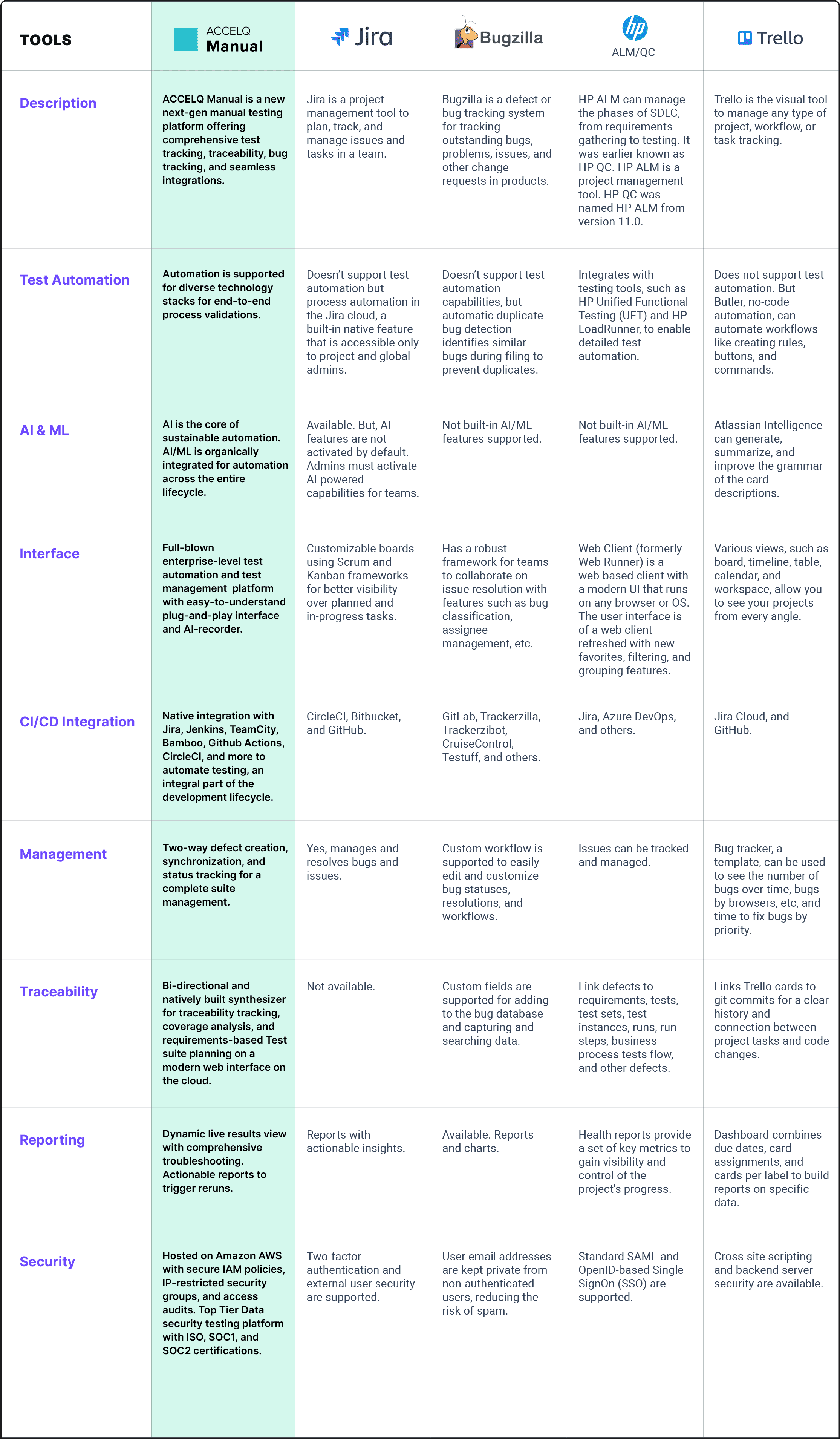

NIST Post-Quantum Cryptography Algorithms (standardized and round-4 candidates) - Comparative Assessment

| Algorithm Name | Type | NIST Security Categories (by parameter set) | Performance | Implementation Complexity |
|---|---|---|---|---|
| CRYSTALS-Kyber (ML-KEM) | KEM | 1, 3, 5 | Fast | Moderate |
| CRYSTALS-Dilithium (ML-DSA) | Signature | 2, 3, 5 | Medium | Moderate |
| Falcon (FN-DSA) | Signature | 1, 5 | Fast | Complex |
| SPHINCS+ (SLH-DSA) | Signature | 1, 3, 5 | Slow | Simple |
| Classic McEliece | KEM | 1, 3, 5 | Slow | Complex |
| BIKE | KEM | 1, 3, 5 | Medium | Moderate |

This is an illustrative comparison. Verify current parameter sets, performance figures and standardization status in NIST's official documentation before relying on it.

Common Migration Bugs: Key Exchange Failures

Key exchange is often the first point of failure during PQC migration, and incorrect parameter handling is a common cause. Each PQC algorithm comes in specific parameter sets that define its security level and performance characteristics (for example ML-KEM-512, ML-KEM-768 and ML-KEM-1024). Using the wrong parameters can weaken security or make negotiation fail outright. The NCCoE documentation (2024) recommends validating these parameters during integration.
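The following is a minimal sketch of parameter validation during negotiation. It assumes a simple model: the peer offers a list of parameter-set names, and local policy maps acceptable names to their NIST security category and enforces a minimum. `SUPPORTED` and `negotiate` are hypothetical names, not any protocol's real wire format. The categories themselves are the ones NIST assigns to the ML-KEM parameter sets.

```python
from typing import List

# Hypothetical local policy: parameter set -> NIST security category
SUPPORTED = {"ML-KEM-512": 1, "ML-KEM-768": 3, "ML-KEM-1024": 5}


def negotiate(offered: List[str], min_category: int = 3) -> str:
    """Pick the first offered parameter set that local policy accepts.

    Unknown names and sets below `min_category` are rejected explicitly
    instead of being silently accepted as a downgrade.
    """
    for name in offered:
        category = SUPPORTED.get(name)
        if category is not None and category >= min_category:
            return name
    raise ValueError(f"no acceptable parameter set in offer {offered!r}")


print(negotiate(["ML-KEM-1024", "ML-KEM-768"]))  # ML-KEM-1024
```

Failing loudly on an unacceptable offer makes a misconfigured peer show up at handshake time rather than as a weaker-than-intended session.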

Key generation problems are also frequent. PQC key generation depends on a cryptographically secure random number generator (CSPRNG), and a flawed RNG can compromise the entire system. Some libraries may also mishandle key generation and produce invalid keys. Silent key rejection is particularly insidious. The key exchange appears to succeed, but the two sides end up with different, unusable keys. With ML-KEM this is partly by design. Decapsulating an invalid ciphertext returns a pseudorandom shared secret (implicit rejection) rather than an error, so the failure only surfaces when encrypted traffic cannot be decrypted.
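A common way to surface silent mismatches early is explicit key confirmation. The sketch below assumes both sides already hold a 32-byte shared secret and a byte transcript of the handshake messages, both hypothetical inputs here. Each side sends an HMAC tag over the transcript, and the receiver compares it in constant time. A mismatch, such as the one implicit rejection would cause, then fails immediately. `secrets.token_bytes` stands in for the CSPRNG output a real KEM would produce.

```python
import hashlib
import hmac
import secrets


def confirmation_tag(shared_secret: bytes, transcript: bytes, role: bytes) -> bytes:
    # A distinct label per role so one side's tag cannot simply be reflected back
    return hmac.new(shared_secret, b"key-confirm|" + role + b"|" + transcript,
                    hashlib.sha256).digest()


def confirm(shared_secret: bytes, transcript: bytes, role: bytes, peer_tag: bytes) -> bool:
    expected = confirmation_tag(shared_secret, transcript, role)
    return hmac.compare_digest(expected, peer_tag)  # constant-time comparison


# Simulate the mismatch implicit rejection would produce
transcript = b"client_hello|server_hello"
secret_a = secrets.token_bytes(32)  # secret derived by the server
secret_b = secrets.token_bytes(32)  # different secret derived by the client
tag = confirmation_tag(secret_a, transcript, b"server")
print(confirm(secret_a, transcript, b"server", tag))  # True
print(confirm(secret_b, transcript, b"server", tag))  # False
```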

The key exchange protocol itself can also fail. PQC keys and ciphertexts are much larger than classical ones: an ML-KEM-768 public key is 1,184 bytes, versus 32 bytes for X25519. If the protocol doesn't handle these sizes correctly, keys can be truncated or corrupted. Debugging these issues often requires careful examination of network traffic and protocol logs. Libraries such as OpenSSL now support the new algorithms (OpenSSL 3.5 added ML-KEM, ML-DSA and SLH-DSA), but developers must make sure they are running versions that include this support.
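In a custom protocol, one way to rule out truncation is to length-prefix every message and treat a short read as an error. The minimal sketch below assumes a byte stream and a 4-byte big-endian length header. This framing is illustrative, not a standard. The size cap also acts as a denial-of-service guard.

```python
import io
import struct

MAX_FRAME = 64 * 1024  # illustrative cap, large enough for any ML-KEM or ML-DSA object


def write_frame(stream, payload: bytes) -> None:
    if len(payload) > MAX_FRAME:
        raise ValueError("payload too large")
    stream.write(struct.pack(">I", len(payload)) + payload)


def _read_exact(stream, n: int) -> bytes:
    data = b""
    while len(data) < n:
        chunk = stream.read(n - len(data))
        if not chunk:
            # The stream ended early: report truncation instead of returning partial data
            raise EOFError(f"expected {n} bytes, got {len(data)}")
        data += chunk
    return data


def read_frame(stream) -> bytes:
    (length,) = struct.unpack(">I", _read_exact(stream, 4))
    if length > MAX_FRAME:
        raise ValueError("declared frame length exceeds limit")
    return _read_exact(stream, length)


buf = io.BytesIO()
write_frame(buf, bytes(1184))  # e.g. an ML-KEM-768 public key
buf.seek(0)
print(len(read_frame(buf)))  # 1184
```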

- Incorrect Parameters: Using wrong settings for the algorithm.

- Flawed RNG: Compromised key generation.

- Silent Key Rejection: Exchange appears successful, but keys are invalid.

Common Public Key Validation Vulnerability

One of the most critical security flaws in quantum-safe cryptography implementations occurs during public key validation. Many developers skip proper validation steps, creating vulnerabilities that can be exploited even before quantum attacks become prevalent. The following example demonstrates a common mistake where public keys are accepted without proper verification.

```python
# VULNERABLE IMPLEMENTATION - DO NOT USE
class VulnerableKeyExchange:
    def __init__(self):
        self.public_key = None
        self.private_key = None

    def receive_public_key(self, received_key_data):
        # SECURITY FLAW: No validation of public key format or parameters
        self.public_key = received_key_data
        return True

    def perform_key_exchange(self, peer_public_key):
        # SECURITY FLAW: Using unvalidated public key directly
        shared_secret = self.calculate_shared_secret(peer_public_key)
        return shared_secret

    def calculate_shared_secret(self, peer_key):
        # Placeholder for the actual key exchange calculation
        return b"shared_secret_placeholder"


# CORRECTED IMPLEMENTATION
class SecureKeyExchange:
    def __init__(self):
        self.public_key = None
        self.private_key = None
        # Example valid sizes: ML-KEM encapsulation key lengths in bytes
        # (FIPS 203) for ML-KEM-512, ML-KEM-768 and ML-KEM-1024
        self.valid_key_sizes = {800, 1184, 1568}
        self.max_key_size = 8192  # hard upper bound, checked before anything else

    def receive_public_key(self, received_key_data):
        # Validate key format and structure
        if not self.validate_key_format(received_key_data):
            raise ValueError("Invalid public key format")
        # Validate key parameters and size
        if not self.validate_key_parameters(received_key_data):
            raise ValueError("Invalid public key parameters")
        # Check for weak or malicious keys
        if not self.validate_key_strength(received_key_data):
            raise ValueError("Weak or potentially malicious public key")
        self.public_key = received_key_data
        return True

    def validate_key_format(self, key_data):
        # Expect the raw encoded key as non-empty bytes; a PEM/DER key
        # would have to be decoded to raw form before this point
        if not isinstance(key_data, (bytes, bytearray)):
            return False
        return len(key_data) > 0

    def validate_key_parameters(self, key_data):
        try:
            key_size = self.extract_key_size(key_data)
        except Exception:
            return False
        # Reject oversized input first (DoS guard), then unexpected sizes
        if key_size > self.max_key_size:
            return False
        return key_size in self.valid_key_sizes

    def validate_key_strength(self, key_data):
        # Check for known weak keys or attack patterns.
        # This is a simplified example - a real implementation would also run
        # the algorithm-specific checks defined by the standard
        try:
            return not self.is_weak_key_pattern(key_data)
        except Exception:
            return False

    def extract_key_size(self, key_data):
        # For a raw encoded key the size is simply its length in bytes
        return len(key_data)

    def is_weak_key_pattern(self, key_data):
        # Placeholder weak-key detection: reject an all-zero key
        return not any(key_data)

    def perform_key_exchange(self):
        # Only proceed with the key that passed receive_public_key();
        # taking the key as a new argument here would bypass validation
        if self.public_key is None:
            raise ValueError("No validated public key available")
        return self.calculate_shared_secret(self.public_key)

    def calculate_shared_secret(self, peer_key):
        # Placeholder for the actual key exchange calculation
        return b"shared_secret_placeholder"
```

The vulnerable implementation accepts any data as a public key without validation. That makes it susceptible to malformed keys, to oversized keys that could cause denial-of-service attacks, and to specially crafted keys designed to exploit cryptographic weaknesses. The corrected version implements multiple validation layers (format verification, parameter checking, and strength validation) and only ever uses the key that passed them. This defensive approach is essential when migrating to post-quantum cryptographic algorithms, as new attack vectors may emerge that target implementation flaws rather than the underlying mathematical problems.
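The class above checks only generic properties. For ML-KEM specifically, FIPS 203 defines concrete input checks on an encapsulation (public) key. The first is a length check: the key must be 384k + 32 bytes. The second is a modulus check: every 12-bit coefficient packed into the first 384k bytes must be below q = 3329. The sketch below is an independent illustration of those two checks written for this article, not code from any library. Where your PQC library performs these checks itself, rely on its built-in validation.

```python
Q = 3329  # ML-KEM modulus
K_BY_LENGTH = {800: 2, 1184: 3, 1568: 4}  # ek length -> k for ML-KEM-512/768/1024


def mlkem_ek_check(ek: bytes) -> bool:
    """Length check plus modulus check on an ML-KEM encapsulation key (FIPS 203)."""
    k = K_BY_LENGTH.get(len(ek))
    if k is None:
        return False  # length check failed
    packed = ek[: 384 * k]  # the trailing 32 bytes are the public seed rho, not checked
    # Every 3 bytes pack two 12-bit coefficients, least significant bits first
    for i in range(0, len(packed), 3):
        b0, b1, b2 = packed[i], packed[i + 1], packed[i + 2]
        c0 = b0 | ((b1 & 0x0F) << 8)
        c1 = (b1 >> 4) | (b2 << 4)
        if c0 >= Q or c1 >= Q:
            return False  # modulus check failed
    return True


print(mlkem_ek_check(bytes(1184)))     # True (zero coefficients are below q)
print(mlkem_ek_check(b"\xff" * 1184))  # False (coefficient 4095 >= q)
print(mlkem_ek_check(bytes(1000)))     # False (wrong length)
```

Note that an all-zero key passes this structural check, which is why a separate weak-pattern check like the one in `SecureKeyExchange` is still useful.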

Debugging Digital Signature Verification Errors

Digital signature verification errors can be particularly tricky because they often surface late in the process, which makes the root cause harder to trace. A frequent issue is a mismatched signature scheme or variant. PQC signature algorithms come in several parameter sets and variants with different security and performance trade-offs (ML-DSA-44, ML-DSA-65 and ML-DSA-87, for example). A signature produced with one will not verify under another.

Improper hashing is another common source of errors. Digital signatures rely on cryptographic hash functions to create a digest of the data being signed. If the signer and verifier use different hash functions, or one side pre-hashes the message and the other does not, the signature will not verify. Make sure the same hash function and hashing mode are used for signing and for verification. Key format issues also contribute to verification problems, for example when one side expects a raw encoded public key and the other supplies a DER-encoded SubjectPublicKeyInfo structure.

Always verify signatures with the correct parameters. These include the algorithm identifier, the hash function, the key parameters and, for ML-DSA, the context string used at signing time. Debugging these errors requires careful examination of the signature data, the key, and the verification process. Tools for analyzing cryptographic signatures can be invaluable in identifying the source of the problem, and proper logging of signature verification attempts is also essential.

- Incorrect Scheme: Selecting the wrong signature algorithm.

- Improper Hashing: Using mismatched hash functions.

- Key Format Issues: Incompatible key formats.
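A mismatch in any of these parameters produces the same bare "invalid signature" result, so it helps to log the parameters on both sides and compare them. The minimal sketch below assumes you can record what the signer used, for example in your own metadata stored alongside the signature. `SignatureParams` and `param_mismatches` are hypothetical names. The context field reflects FIPS 204: ML-DSA signing and verification both take an optional context string, and the two must match.

```python
import logging
from dataclasses import dataclass, fields
from typing import List, Optional

logger = logging.getLogger("sigverify")


@dataclass(frozen=True)
class SignatureParams:
    algorithm: str          # e.g. "ML-DSA-65"
    prehash: Optional[str]  # None for pure ML-DSA, else the pre-hash function
    context: bytes          # FIPS 204 context string (often empty)
    key_encoding: str       # e.g. "raw" or "SubjectPublicKeyInfo DER"


def param_mismatches(signer: SignatureParams, verifier: SignatureParams) -> List[str]:
    """Log and return the name of every field that differs between the two sides."""
    mismatched = []
    for f in fields(SignatureParams):
        s, v = getattr(signer, f.name), getattr(verifier, f.name)
        if s != v:
            logger.warning("mismatch in %s: signer=%r verifier=%r", f.name, s, v)
            mismatched.append(f.name)
    return mismatched


signer = SignatureParams("ML-DSA-65", None, b"", "raw")
verifier = SignatureParams("ML-DSA-65", "SHA-512", b"", "raw")
print(param_mismatches(signer, verifier))  # ['prehash']
```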

Troubleshooting Performance Issues in Post-Quantum Implementations

Some PQC algorithms are more computationally intensive than their classical counterparts like RSA or ECC; SPHINCS+ signing and Classic McEliece key generation are notably slow. In addition, all of the NIST algorithms have substantially larger keys, ciphertexts or signatures. Both factors can create performance bottlenecks, especially in high-throughput applications. Profiling is the first step in identifying them, and tools like perf and gprof can pinpoint the code sections that consume the most CPU time.
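perf and gprof target native code. If the service is written in Python, like this article's examples, the standard-library counterpart is `cProfile`. The minimal sketch below profiles a single call and returns the top entries sorted by cumulative time. `slow_operation` is a hypothetical stand-in for your PQC-heavy code path.

```python
import cProfile
import io
import pstats


def profile_call(fn, *args, top: int = 10, **kwargs):
    """Run fn under cProfile; return (result, report of the top entries by cumulative time)."""
    profiler = cProfile.Profile()
    profiler.enable()
    try:
        result = fn(*args, **kwargs)
    finally:
        profiler.disable()
    out = io.StringIO()
    pstats.Stats(profiler, stream=out).sort_stats("cumulative").print_stats(top)
    return result, out.getvalue()


def slow_operation(n: int) -> int:
    # Hypothetical stand-in for an expensive cryptographic code path
    return sum(i * i for i in range(n))


result, report = profile_call(slow_operation, 100_000)
print(report)
```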

Optimizing code for speed is crucial. This might involve using optimized libraries, leveraging hardware acceleration, or carefully choosing the parameter set. There is usually a trade-off between security and performance, and aggressive optimization can weaken security. For example, replacing constant-time code with faster, data-dependent branches or table lookups can open timing side channels. Memory usage is another concern.

PQC algorithms often require more memory than classical algorithms; Classic McEliece public keys, for instance, range from about 261 KB to over 1 MB. This can be a problem on resource-constrained devices. Developers need to carefully manage memory allocation and avoid memory leaks. Consider using techniques like memory pooling to reduce the overhead of memory allocation. Benchmarking different PQC implementations can help identify the most efficient solution for a given application.
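When comparing implementations, repeated timing with a warm-up phase and a median is more robust than a single run. The minimal sketch below uses only the standard library. `op` is any zero-argument callable that wraps the operation under test, for example an encapsulation call from the PQC library you are evaluating. No specific library API is assumed.

```python
import hashlib
import statistics
import time
from typing import Callable, Dict


def benchmark(op: Callable[[], object], iterations: int = 1000, warmup: int = 50) -> Dict[str, float]:
    for _ in range(warmup):
        op()  # warm caches and any lazy initialization before measuring
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        op()
        samples.append(time.perf_counter() - start)
    samples.sort()
    return {
        "min_us": samples[0] * 1e6,
        "median_us": statistics.median(samples) * 1e6,
        "p99_us": samples[int(0.99 * (len(samples) - 1))] * 1e6,
    }


# Example: time SHA3-256 over 1 KiB (a hash ML-KEM uses internally)
print(benchmark(lambda: hashlib.sha3_256(bytes(1024)).digest()))
```

Reporting a tail percentile alongside the median matters for high-throughput services, where occasional slow operations can dominate latency.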

Debugging Dapr Cryptography Issues: A Practical Guide

When working with Dapr, cryptography issues can sometimes be obscured by the runtime’s abstractions. Common error messages include failures during key exchange or signature verification. OneUptime’s documentation suggests focusing on Dapr’s logging output to understand the underlying cause. Look for error codes related to cryptography operations.

Interpreting Dapr's logging requires understanding how it handles cryptography. Dapr uses pluggable cryptography providers, so the specific error messages depend on the provider in use. Check the provider's error logs to see where the handshake failed, and consult the provider's documentation for details on the error codes. One strategy for isolating the root cause is to disable PQC temporarily and see whether the issue resolves.
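The minimal sketch below narrows sidecar logs down to cryptography-related problems. It assumes the sidecar emits JSON logs (for example via daprd's `--log-as-json` option) with `level`, `msg` and `scope` fields, so check those field names against your own output. The keyword list is a guess to tune, not a list of Dapr error codes, and the sample lines are made up for illustration.

```python
import json
from typing import Dict, Iterable, Iterator

# Illustrative keywords; tune them to the messages your provider emits
KEYWORDS = ("crypt", "tls", "handshake", "certificate", "decapsulat", "signature")
PROBLEM_LEVELS = {"warn", "warning", "error", "fatal"}


def crypto_problems(lines: Iterable[str]) -> Iterator[Dict[str, str]]:
    for line in lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip non-JSON lines mixed into the stream
        if not isinstance(entry, dict) or entry.get("level") not in PROBLEM_LEVELS:
            continue
        msg = str(entry.get("msg", ""))
        if any(k in msg.lower() for k in KEYWORDS):
            yield {"time": str(entry.get("time", "")),
                   "scope": str(entry.get("scope", "")),
                   "msg": msg}


sample = [  # made-up lines, not real Dapr output
    '{"level":"info","msg":"dapr initialized","scope":"dapr.runtime"}',
    '{"level":"error","msg":"TLS handshake error from 10.0.0.5","scope":"dapr.runtime.grpc"}',
    "plain text line",
]
for hit in crypto_problems(sample):
    print(hit["scope"], hit["msg"])
```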

Configuring Dapr for PQC involves specifying the desired algorithms and parameters in the Dapr configuration file. Ensure that the configuration is correct and that the selected algorithms are supported by the cryptography provider. If you're still encountering issues, consider using a network analyzer to inspect the communication between Dapr components.

Testing and Validation: Ensuring Your Migration is Secure

Thorough testing is paramount after migrating to PQC. Unit tests should verify the correct implementation of individual cryptographic operations, such as key generation, encapsulation and decapsulation, and signing and verification. Integration tests should ensure that the PQC algorithms work correctly within the overall system, and penetration testing is essential for identifying vulnerabilities that might have been missed.
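A reusable round-trip check captures the core KEM unit tests. The sketch below assumes a hypothetical KEM object with `keygen()`, `encaps(ek)` and `decaps(dk, ct)` methods, so wrap your real library to fit. It checks that both sides derive the same secret and that a tampered ciphertext does not reproduce it. With ML-KEM, implicit rejection means tampering yields a different secret, not an exception. `ToyKEM` exists only so the harness can run here. It is deliberately NOT secure and must never be used for real data.

```python
import hashlib
import secrets


def check_kem_roundtrip(kem, trials: int = 100) -> None:
    for _ in range(trials):
        ek, dk = kem.keygen()
        ct, ss_sender = kem.encaps(ek)
        if kem.decaps(dk, ct) != ss_sender:
            raise AssertionError("shared secrets differ")
        tampered = bytes([ct[0] ^ 0x01]) + ct[1:]  # flip one bit
        if kem.decaps(dk, tampered) == ss_sender:
            raise AssertionError("tampered ciphertext reproduced the secret")


class ToyKEM:
    """INSECURE stand-in so the harness runs: the 'public' key equals the secret key."""

    def keygen(self):
        dk = secrets.token_bytes(32)
        return dk, dk

    def encaps(self, ek):
        m = secrets.token_bytes(32)
        ct = bytes(a ^ b for a, b in zip(m, ek))
        return ct, hashlib.sha3_256(m).digest()

    def decaps(self, dk, ct):
        m = bytes(a ^ b for a, b in zip(ct, dk))
        return hashlib.sha3_256(m).digest()


check_kem_roundtrip(ToyKEM())
print("round-trip checks passed")
```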

Tools for validating PQC implementations are becoming increasingly available. NIST provides test vectors for its standardized algorithms, which can be used to verify the correctness of an implementation. Third-party libraries and services also offer validation tools. Ongoing monitoring is critical for detecting any security issues that might arise after deployment.
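Known-answer tests (KATs) follow the same pattern whatever the algorithm: feed in fixed inputs and compare the results against published outputs. The minimal sketch below shows the harness shape. It uses two well-known published hash vectors (SHA-256 of "abc" and SHA3-256 of the empty message) as stand-ins, because running NIST's ML-KEM or ML-DSA vectors requires a PQC library. The vector-list format here is an assumption, not NIST's file format.

```python
import hashlib
from typing import List, Tuple

# (hashlib name, message, expected digest in hex) from published test vectors
VECTORS: List[Tuple[str, bytes, str]] = [
    ("sha256", b"abc",
     "ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad"),
    ("sha3_256", b"",
     "a7ffc6f8bf1ed76651c14756a061d662f580ff4de43b49fa82d80a4b80f8434a"),
]


def run_kats(vectors) -> List[str]:
    """Return a description of every vector whose output does not match."""
    failures = []
    for name, message, expected in vectors:
        actual = hashlib.new(name, message).hexdigest()
        if actual != expected:
            failures.append(f"{name}({message!r})")
    return failures


print(run_kats(VECTORS) or "all KATs passed")
```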

Regularly review and update your cryptographic configurations. As the field of PQC evolves, new algorithms and best practices will emerge. Staying up-to-date with the latest research and recommendations is essential for maintaining a secure system. Consider implementing automated security scans to detect any potential vulnerabilities. A layered approach to security is always best.
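An automated check can start as simply as scanning configuration for public-key algorithms that are not quantum-resistant. The minimal sketch below assumes algorithm names appear as plain strings somewhere in a nested config, such as dicts and lists loaded from JSON. The name patterns are illustrative and will produce some false positives. Hybrid groups that pair a classical algorithm with ML-KEM, such as X25519MLKEM768, are deliberately not flagged.

```python
import re
from typing import Any, List

# Illustrative prefixes of quantum-vulnerable public-key algorithms
VULNERABLE = re.compile(r"^(rsa|ecdsa|ecdh|x25519|x448|ed25519|ed448|ffdhe|secp)")
HYBRID_MARKERS = ("mlkem", "ml-kem", "kyber")


def is_quantum_vulnerable(name: str) -> bool:
    lowered = name.lower()
    if any(m in lowered for m in HYBRID_MARKERS):
        return False  # hybrid group with a PQC component
    return bool(VULNERABLE.match(lowered))


def find_vulnerable(config: Any, path: str = "$") -> List[str]:
    """Walk a nested config and return 'path = value' for each flagged algorithm name."""
    hits: List[str] = []
    if isinstance(config, dict):
        for key, value in config.items():
            hits.extend(find_vulnerable(value, f"{path}.{key}"))
    elif isinstance(config, list):
        for i, value in enumerate(config):
            hits.extend(find_vulnerable(value, f"{path}[{i}]"))
    elif isinstance(config, str) and is_quantum_vulnerable(config):
        hits.append(f"{path} = {config}")
    return hits


config = {"tls": {"groups": ["X25519MLKEM768", "secp256r1"], "signature": "ML-DSA-65"},
          "signing": {"algorithm": "ECDSA-P256"}}
print(find_vulnerable(config))  # ['$.tls.groups[1] = secp256r1', '$.signing.algorithm = ECDSA-P256']
```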

Idgi. Seems like exposing all security bugs in say, a few days, is bad. Because it will take longer than a few days to fix and now everyone knows about the vulnerability.

— ourania, elderly multigravida shikse⁷ (@ouranometrian2) April 12, 2026

The problem is the speed and scale. Am I missing something here
